The AI cheating panic is loud. The way students actually use ChatGPT is much quieter.

After interviewing 50+ Cal Poly students, a clearer picture of AI use on campus emerges

This is a guest post by Parker Jones, a student at Cal Poly and ChatGPT Lab member who went above and beyond with a research project on campus—one we learned a lot from and are excited to share.

As a student, I use ChatGPT to help me learn and achieve more. Which is why it’s been so disorienting to watch the public narrative turn it into something unrecognizable — nothing more than a shortcut machine for cheaters.

Headlines prop up this panic, professors joke about hallucinations, leaders gloat about AI readiness … but on campus, no one actually talks about how students are or should be using this technology. In that silence, the loudest, most negative voices end up speaking for students and painting our relationship with it in the worst possible light. Yet when I look around at my own classes and friend groups, that picture just doesn’t match what I see.

If I’m wrong about my classmates, fine — but I want to hear it from them.

That’s why, over the past few months, I interviewed more than 50 Cal Poly students to understand how they use this technology and how they feel about it. What I found was far more ordinary and much more hopeful than the story we’ve been told about students and AI.

The headlines did get one thing right: almost every student is using ChatGPT, and they’re using it a lot. Most students use it weekly, if not daily, and describe it as an integral part of how they approach their work. I found that other AI tools aren’t prominent; for students at Cal Poly, AI is synonymous with ChatGPT.

Where the current narrative breaks down is in assuming that students are using ChatGPT to cheat. In reality, they’re using it in the most boring ways imaginable. A junior studying electrical engineering told me, “The best way I’ve heard Chat described for school is like 24-7 office hours.”

It’s true. Most student use follows that office-hours style: asking follow-up questions from a lecture, having it unpack assignment instructions, or getting guided help on a single homework problem.

Beyond that, they use it for a handful of more creative but equally grounded things: reviewing or editing writing, sanity-checking important emails, organizing study plans in their calendar, generating study materials before an exam, or pre-grading projects before they turn them in.

Students don’t think of ChatGPT in terms of “use cases,” though. It’s just become part of how they learn. They turn to it because they like having an always-available, nonjudgmental, infinitely patient tutor that can explain anything in the exact way they need.

Even with all this practical use, one thing weighs on students more than anything else: the fear of becoming overreliant. As one freshman in computer science put it, “I don’t use it in my Comp Sci 101 class to code; I think it’s important to understand the fundamentals when you’re learning something brand-new.” For him, ChatGPT is there to walk through concepts and explain tricky ideas when lectures don’t click, not to spit out solutions to the homework.

That attitude isn’t unusual. Students aren’t looking for the easy way out; they care about protecting their own learning. At Cal Poly, we “learn by doing,” and students understand that if you don’t do it, you don’t learn it.

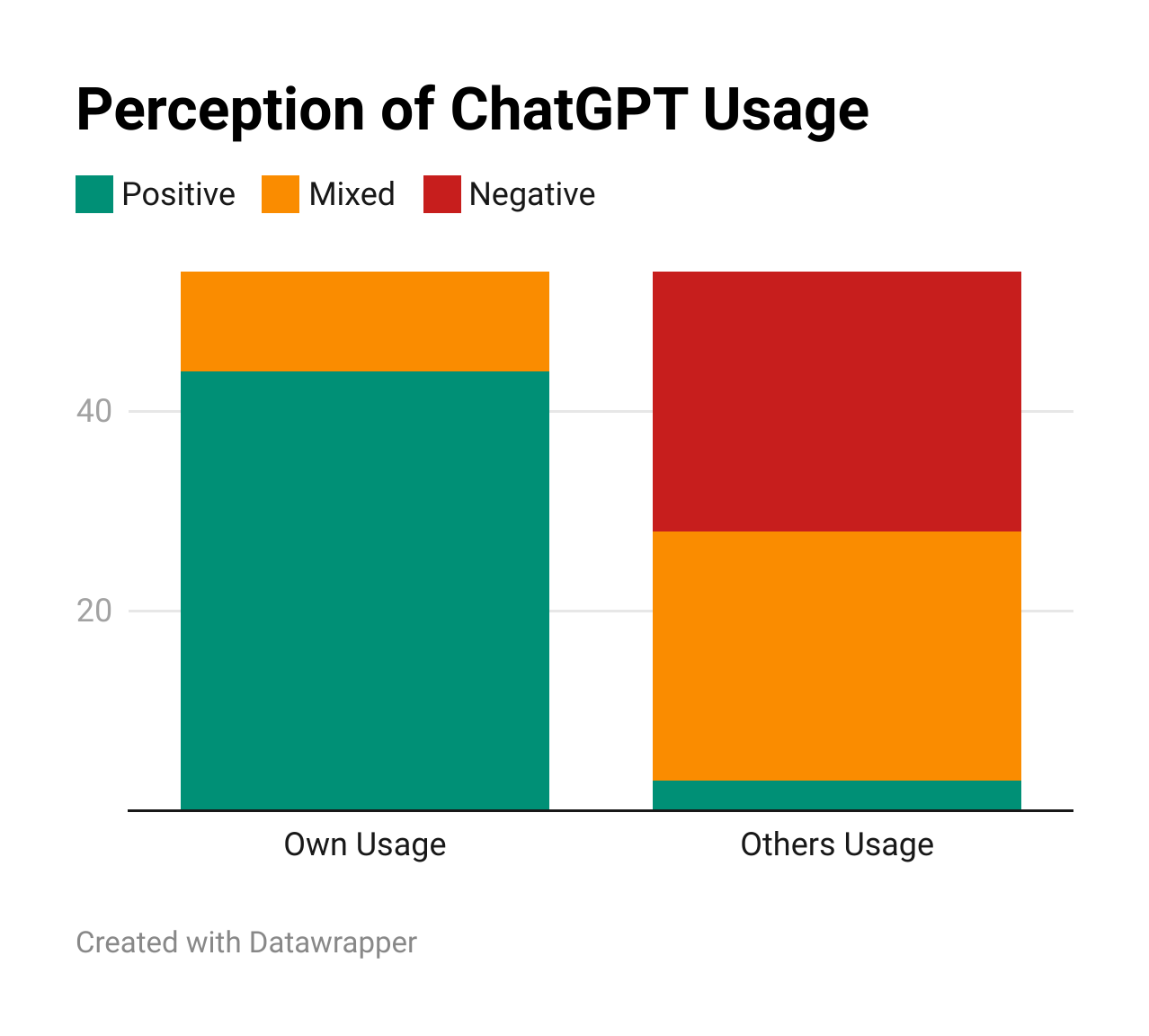

But there’s a catch: while most students trust their own use as responsible, they don’t feel the same about others. The accompanying chart shows this gap: how students rate their own usage versus that of a “generic” Cal Poly student.

Students trust what they see in their own circles — mostly boring, responsible use — but the second they zoom out to “everyone else,” the narrative takes over. It’s easier to believe the loud story you’ve been told than the quieter one you’re actually living.

Part of the problem is that no one is comparing notes. Students don’t tell their friends that ChatGPT helped them understand a confusing lecture at 2 a.m. So responsible use stays hidden, while the stereotype of the AI zombie spreads.

From these conversations, it’s obvious that most students are not using ChatGPT to get out of their work. They’re using it to get further into it. To stay curious. To push past confusion. To spend more time on the parts that genuinely interest them.

This is exactly the kind of learning universities should want to encourage. Yet they’re leaving it unsupported, still waiting for someone else to publish the instruction manual on AI.

The 19th-century English theologian John Henry Newman described a university as “a place for the communication and circulation of thought.” When it comes to AI, though, universities have facilitated a culture that tells students it’s not something to talk about.

This response reveals the crossroads universities are facing. They can choose to double down on being businesses — degree factories where you send checks, grind through requirements, pick up a diploma at the end, and then hope it still means something. On this path, AI will always be a threat to the bottom line.

Instead, they can choose to meet this moment and become something greater: spaces that foster collaboration, teach young people how to contribute to the communities they care about, and prepare students with the skills they need to be useful in the workforce and to the world. Why would AI be a threat to any of that?

I am hopeful that the best days for universities are ahead of us. Yet realizing them requires more than a new line in the syllabus; it calls on us to ask again what a university can be.

Students are longing for a better system of higher education, and many are ready to help envision it with AI. Universities now have to decide whether they will help build that future — or stay silent while it’s built without them.

Parker Jones is a Software Engineering and AI major at Cal Poly with a minor in Psychology, focused on designing better AI systems through insights from human psychology.

I hear ya, Parker. I teach high school history and am struck by how student work from both advanced and struggling writers shows up error-free, coherent, and sophisticated. I don't think they are copying and pasting (well, maybe a little). But I've asked a few to show me their work, just like in math. For those who admit they used GPT assistance, I ask that they send me the chat link, and we discuss their queries and follow-up questions (the key) with the AI on the way to the final product. Great conversations with the AI are accepted. A one-question submission, à la "write three paragraphs on the causes of WWI," gets sent back for more detail on how they and the AI got to that submission.

The real educational crisis may not be that students use AI.

It may be that many educational systems were already rewarding performance over understanding long before AI arrived.

AI simply made the crack visible.